Production-Ready RAG Q&A System

Project Details

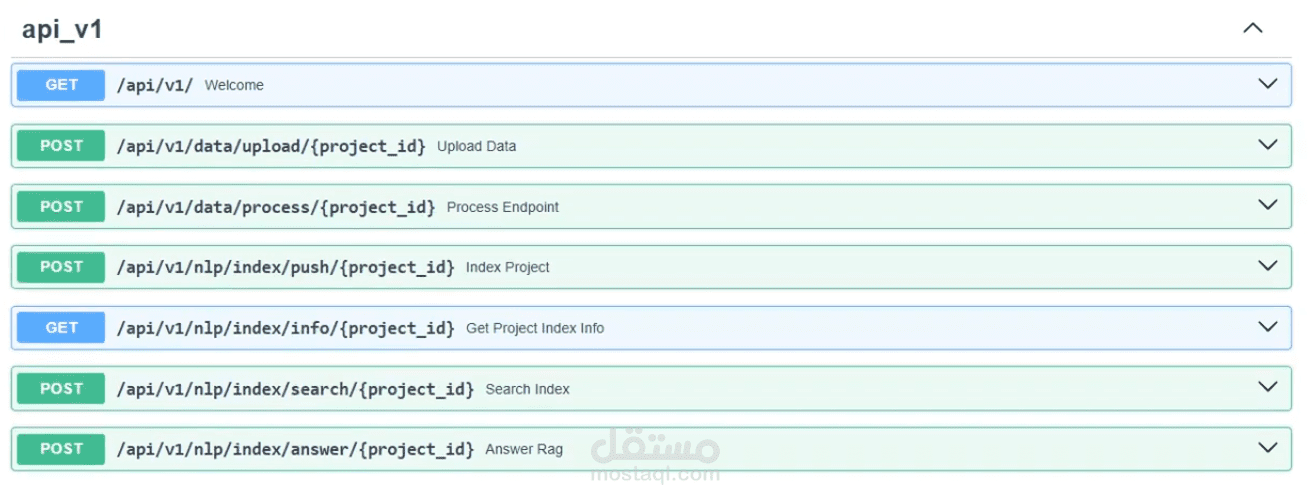

A fully containerized Retrieval-Augmented Generation (RAG) system built for production environments. Users upload documents and organize them into separate projects by topic (e.g. Health, Business, Legal), and each project maintains its own isolated knowledge base. Users can then query any project through a RESTful API and receive LLM-generated answers grounded in that project's documents.
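To illustrate the project isolation described above, here is a minimal standard-library sketch. It is not the system's actual code: `ProjectStore`, `embed`, and `cosine` are hypothetical names, and the toy bag-of-words vectors stand in for real embeddings and a real vector database. The only point it makes is that a query scans one project's collection and never sees another's.

```python
import math
from collections import Counter, defaultdict


def embed(text: str) -> Counter:
    """Toy bag-of-words vector (assumption: a stand-in for a real embedding model)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


class ProjectStore:
    """Hypothetical in-memory store: one separate chunk collection per project ID."""

    def __init__(self) -> None:
        self._collections = defaultdict(list)  # project_id -> [(chunk, vector)]

    def add(self, project_id: str, chunk: str) -> None:
        self._collections[project_id].append((chunk, embed(chunk)))

    def search(self, project_id: str, query: str, top_k: int = 3) -> list[str]:
        # Only the requested project's collection is scanned.
        q = embed(query)
        scored = [
            (cosine(q, vec), chunk)
            for chunk, vec in self._collections.get(project_id, [])
        ]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        # Drop chunks that share no terms with the query.
        return [chunk for score, chunk in scored[:top_k] if score > 0]


if __name__ == "__main__":
    store = ProjectStore()
    store.add("health", "aspirin reduces fever and pain")
    store.add("legal", "a contract requires offer and acceptance")
    print(store.search("health", "what reduces fever"))
    print(store.search("legal", "what reduces fever"))
```

In a real deployment the per-project collection would typically map to a separate collection or table in the vector database rather than an in-memory list.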

Key highlights:

- Multi-project document management — upload and organize documents by topic, each with its own isolated vector store

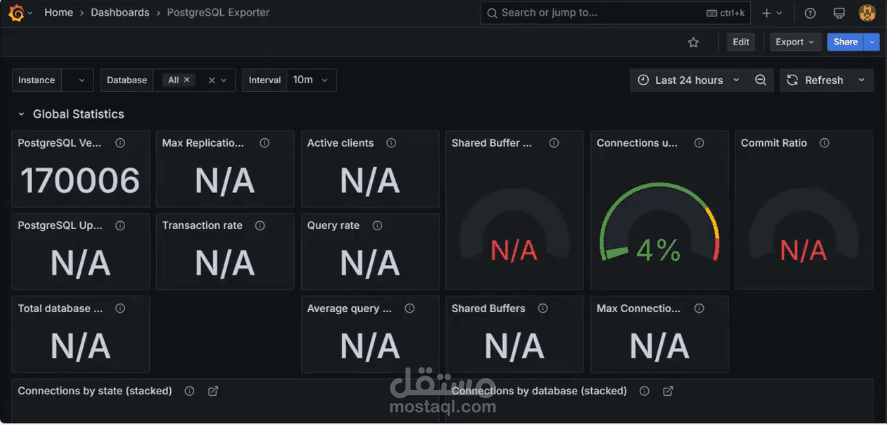

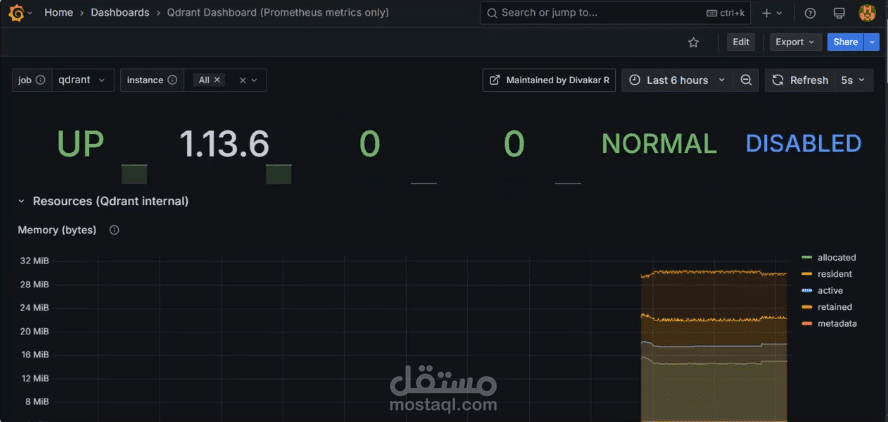

- Semantic retrieval using LangChain + Qdrant / PGVector (PostgreSQL)

- Cohere embeddings + LLM integration for accurate answer generation

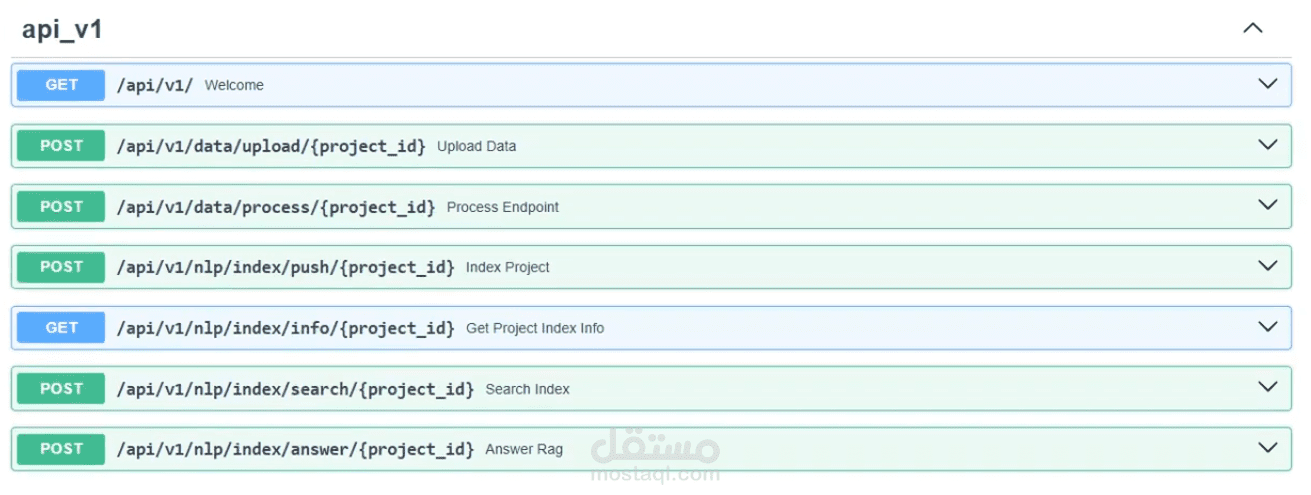

- FastAPI backend with clean MVC architecture and Postman-tested endpoints

- Fully containerized with Docker & Docker Compose, Nginx as reverse proxy

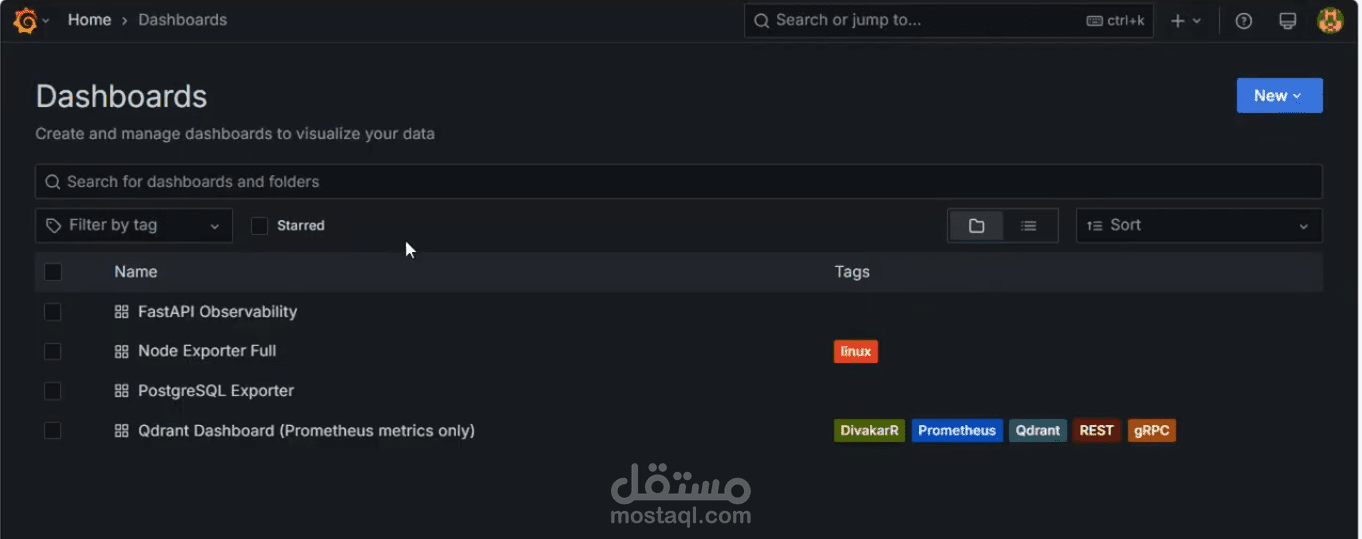

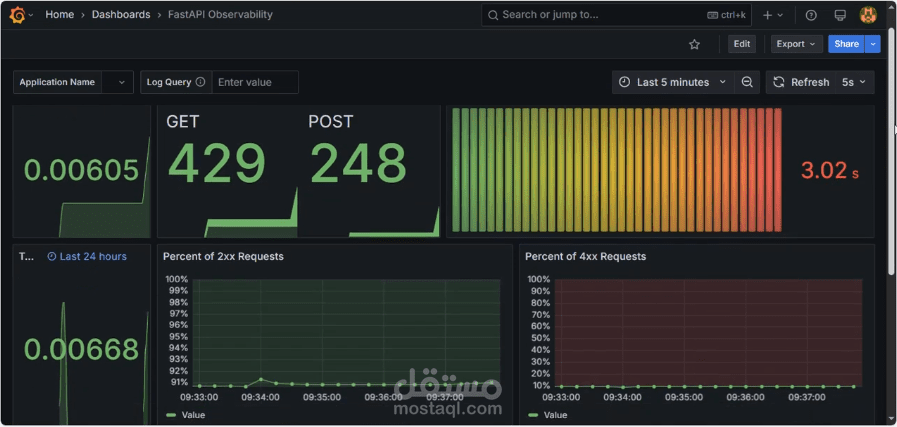

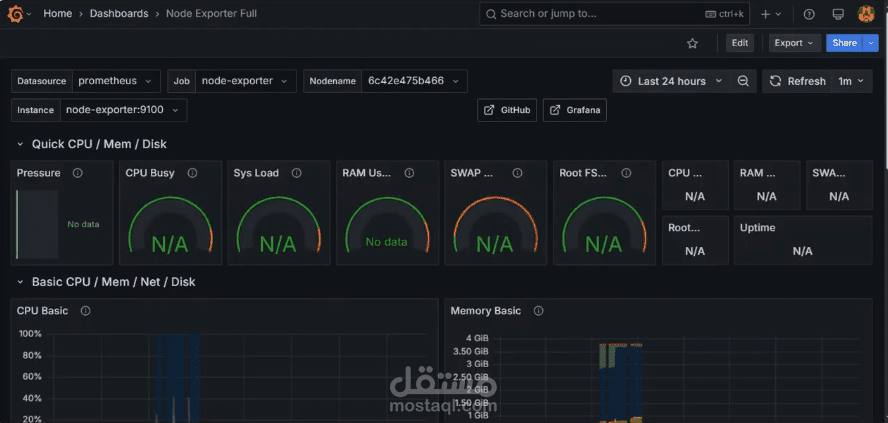

- Real-time monitoring dashboard using Prometheus & Grafana
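The answer-generation step in the highlights can be sketched roughly as follows. This assumes a common RAG pattern and does not show the project's actual prompt or client code: `build_prompt`, `answer`, the prompt wording, and the `max_chars` budget are all hypothetical, and `generate` is a placeholder for whatever function sends a prompt to the LLM provider.

```python
from typing import Callable


def build_prompt(question: str, chunks: list[str], max_chars: int = 2000) -> str:
    """Number the retrieved chunks and place them ahead of the question."""
    context_lines = []
    used = 0
    for i, chunk in enumerate(chunks, start=1):
        if used + len(chunk) > max_chars:
            break  # stay within a rough context budget (assumed limit)
        context_lines.append(f"[{i}] {chunk}")
        used += len(chunk)
    context = "\n".join(context_lines) or "(no relevant documents found)"
    return (
        "Answer the question using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )


def answer(question: str, chunks: list[str], generate: Callable[[str], str]) -> str:
    """`generate` is any callable that sends a prompt to an LLM and returns its text."""
    return generate(build_prompt(question, chunks))


if __name__ == "__main__":
    # A stub generator, used here only so the sketch runs without an API key.
    def echo(prompt: str) -> str:
        return f"(stub) received {len(prompt)} characters"

    retrieved = ["Aspirin reduces fever.", "Rest helps recovery."]
    print(build_prompt("What reduces fever?", retrieved))
    print(answer("What reduces fever?", retrieved, echo))
```

Passing the LLM call in as a plain callable keeps the prompt logic testable without network access and makes it straightforward to swap providers.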