Fraud detetction

تفاصيل العمل

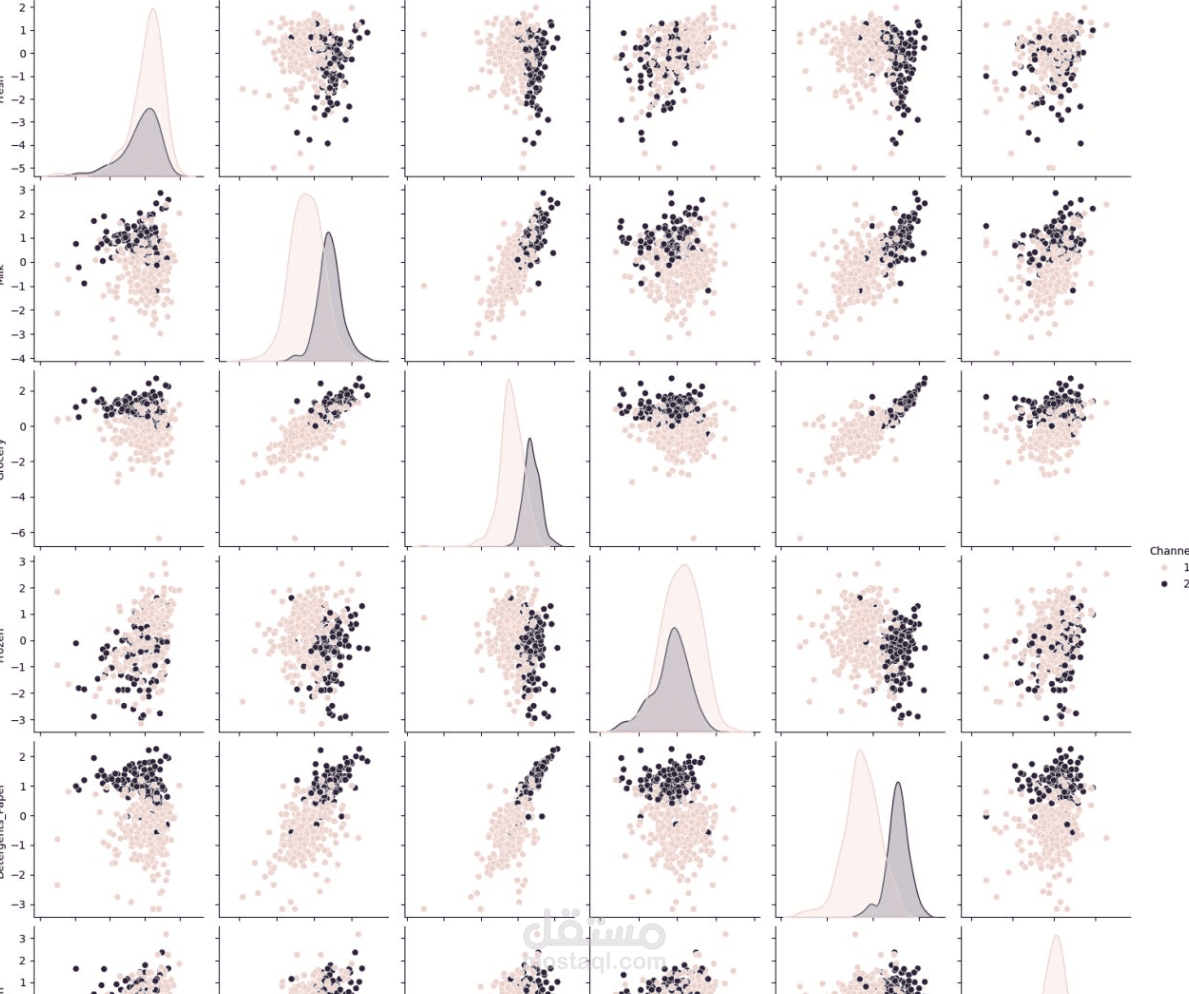

Data Processing: Cleaned and engineered features from large-scale transactional data using Python (Pandas, NumPy), handling extreme class imbalance using techniques like SMOTE (Synthetic Minority Over-sampling Technique).

Predictive Modeling: Trained and tuned multiple classification algorithms (e.g., Random Forest, XGBoost, Logistic Regression) using Scikit-Learn to isolate fraudulent activities with high precision and recall.

Performance Metrics: Evaluated model efficacy using Area Under the Precision-Recall Curve (AUPRC) and F1-score, prioritizing the minimization of false negatives to reduce financial risk.

Data Visualization: Developed an interactive dashboard using Plotly and Dash to provide real-time monitoring of transaction anomalies, risk scoring, and model performance metrics for non-technical stakeholders.