Multiple linear regression model

Work Details

Multiple Linear Regression Model using Gradient Descent

This project implements a Multiple Linear Regression model trained using the Gradient Descent optimization algorithm in Python. The goal is to model the relationship between a dependent variable and multiple independent features.

---

Model Overview

Multiple Linear Regression assumes a linear relationship of the form:

$$y = w_0 + w_1 x_1 + w_2 x_2$$

Where:

y is the target variable

x_1, x_2 are the input features

w_0 is the bias (intercept)

w_1, w_2 are the model weights
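As a minimal sketch of the model equation above, the prediction for a batch of samples can be written as one matrix-vector product. The function name `predict` and the numbers are illustrative assumptions, not taken from the project code.

```python
import numpy as np

def predict(X, w0, w):
    """Return w0 + w_1*x_1 + w_2*x_2 + ... for every row of X (hypothetical helper)."""
    return w0 + X @ w

# Two samples with two features each (made-up values).
X = np.array([[1.0, 2.0],
              [3.0, 4.0]])
w0 = 0.5                    # bias (intercept)
w = np.array([2.0, -1.0])   # one weight per feature

y_hat = predict(X, w0, w)
print(y_hat)  # [0.5 2.5]
```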

---

Training using Gradient Descent

Instead of solving for the parameters in closed form (the normal equation), the model optimizes them with Gradient Descent, which iteratively reduces the cost function.

Cost Function (Mean Squared Error, halved so the factor of 2 cancels in the gradient):

$$J(w) = \frac{1}{2m} \sum_{i=1}^{m} \left(h^{(i)} - y^{(i)}\right)^2$$

Update Rule:

$$w_j := w_j - \alpha \frac{\partial J}{\partial w_j}$$

Where:

alpha is the learning rate

m is the number of training samples

h is the model's prediction for a training sample, and y is its actual value
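For the halved squared-error cost, the partial derivatives work out to (1/m) * sum(h - y) for the bias and (1/m) * sum((h - y) * x_j) for each weight. The sketch below computes the cost and these gradients with NumPy. The function name `cost_and_gradients` and the sample data are assumptions for illustration only.

```python
import numpy as np

def cost_and_gradients(X, y, w0, w):
    """Return the halved MSE cost and its gradients (hypothetical helper)."""
    m = X.shape[0]
    error = (w0 + X @ w) - y              # h - y for every sample
    cost = np.sum(error ** 2) / (2 * m)   # J = 1/(2m) * sum (h - y)^2
    grad_w0 = np.sum(error) / m           # dJ/dw_0
    grad_w = X.T @ error / m              # dJ/dw_j for each feature j
    return cost, grad_w0, grad_w

# Made-up data: two samples, two features, all parameters at zero.
X = np.array([[1.0, 2.0],
              [3.0, 4.0]])
y = np.array([1.0, 2.0])

cost, grad_w0, grad_w = cost_and_gradients(X, y, 0.0, np.zeros(2))
print(cost, grad_w0, grad_w)  # 1.25 -1.5 [-3.5 -5. ]
```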

---

Training Process

1. Initialize weights (w) with zeros

2. Compute predictions using current weights

3. Calculate error (difference between predicted and actual values)

4. Compute gradients for each parameter

5. Update parameters using gradient descent

6. Repeat for a fixed number of iterations or until convergence
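The six steps above can be sketched as a single loop. This is an illustrative version, not the project's actual code: the function name `fit`, the defaults `alpha=0.1`, `n_iters=5000`, `tol=1e-12`, and the synthetic noise-free data are all assumptions.

```python
import numpy as np

def fit(X, y, alpha=0.1, n_iters=5000, tol=1e-12):
    """Train bias w0 and weights w with batch gradient descent (sketch)."""
    m, n = X.shape
    w0, w = 0.0, np.zeros(n)                  # 1. initialize with zeros
    prev_cost = np.inf
    for _ in range(n_iters):                  # 6. fixed iteration budget...
        error = (w0 + X @ w) - y              # 2-3. predictions and errors
        cost = np.sum(error ** 2) / (2 * m)
        if abs(prev_cost - cost) < tol:       # ...or stop once the cost stalls
            break
        prev_cost = cost
        grad_w0 = np.sum(error) / m           # 4. gradients
        grad_w = X.T @ error / m
        w0 -= alpha * grad_w0                 # 5. parameter update
        w -= alpha * grad_w
    return w0, w

# Synthetic data generated from y = 4 + 3*x_1 - 2*x_2 (assumed for the demo).
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 2))
y = 4.0 + 3.0 * X[:, 0] - 2.0 * X[:, 1]

w0, w = fit(X, y)
print(round(w0, 3), np.round(w, 3))
```

On features with very different scales, a learning rate like this one may diverge; standardizing the features first is a common remedy.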

---

Implementation Details (Python)

Built using NumPy for efficient numerical operations

Computations are vectorized for performance

Gradient Descent loop implemented manually (no high-level ML libraries)
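To illustrate what vectorization means here, the sketch below computes the same gradients twice: once with an explicit per-sample Python loop and once with NumPy array operations. Both function names and the random data are hypothetical. The two results should agree up to floating-point rounding, while the vectorized form avoids the Python-level loop.

```python
import numpy as np

def gradient_loop(X, y, w0, w):
    """Accumulate the gradients one sample at a time (slow reference version)."""
    m, n = X.shape
    grad_w0 = 0.0
    grad_w = np.zeros(n)
    for i in range(m):
        error_i = w0 + np.dot(X[i], w) - y[i]
        grad_w0 += error_i
        grad_w += error_i * X[i]
    return grad_w0 / m, grad_w / m

def gradient_vectorized(X, y, w0, w):
    """Compute the same gradients with whole-array operations."""
    m = X.shape[0]
    error = (w0 + X @ w) - y
    return error.mean(), X.T @ error / m

# Random data, assumed only for the comparison.
rng = np.random.default_rng(1)
X = rng.normal(size=(50, 2))
y = rng.normal(size=50)
w0, w = 0.3, np.array([1.0, -0.5])

loop_result = gradient_loop(X, y, w0, w)
vec_result = gradient_vectorized(X, y, w0, w)
```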

---

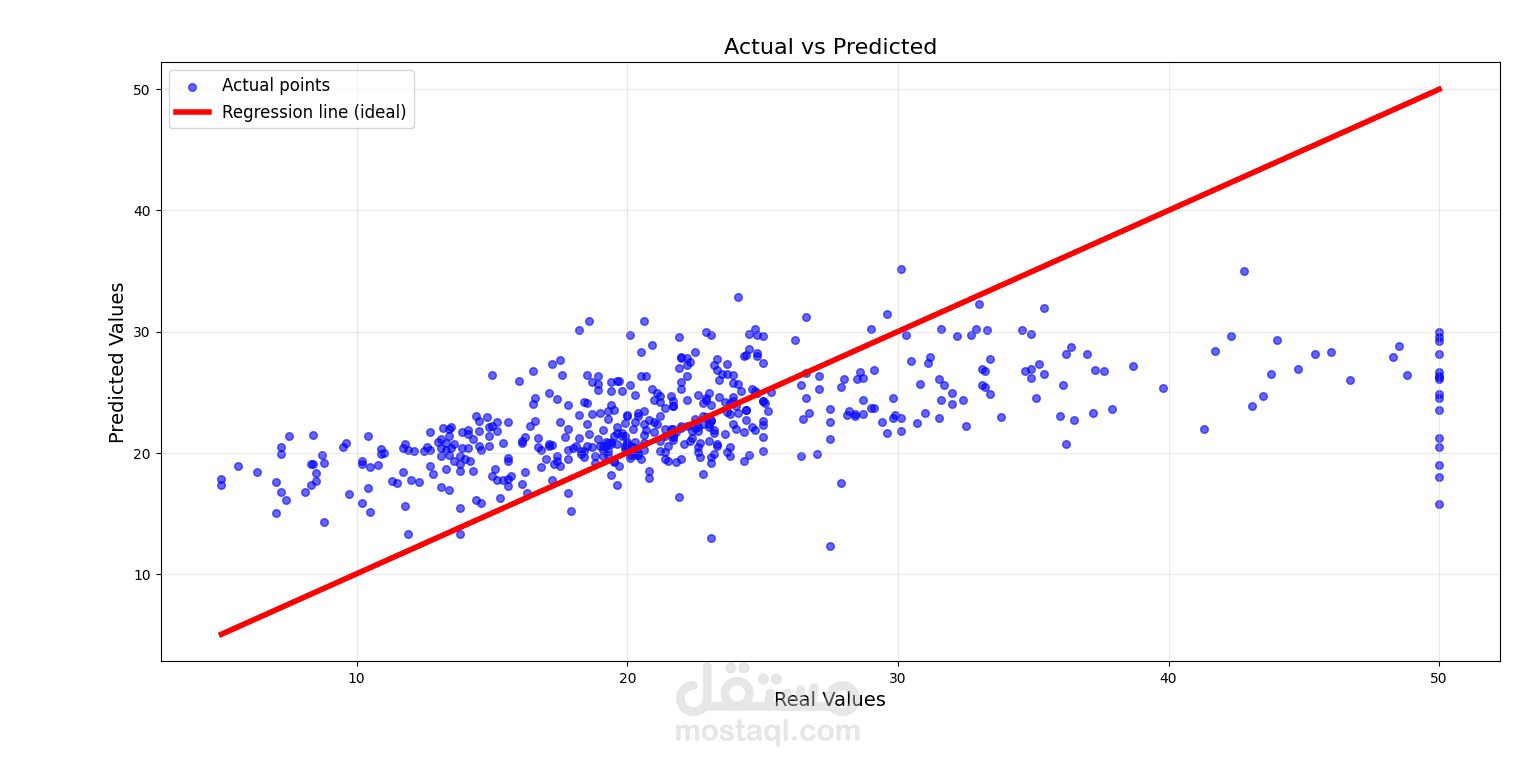

Model Evaluation

The model performance can be evaluated using:

Mean Squared Error (MSE)

Visualization of predicted vs actual values
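A minimal sketch of both evaluation steps follows. The helper names and the sample values are assumptions. The plotting helper assumes matplotlib is installed and imports it only when called, so the MSE part runs without it.

```python
import numpy as np

def mean_squared_error(y_true, y_pred):
    """Average squared difference between actual and predicted values."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return np.mean((y_pred - y_true) ** 2)

def plot_predicted_vs_actual(y_true, y_pred):
    """Scatter predicted against actual values (requires matplotlib)."""
    import matplotlib.pyplot as plt
    lo = min(np.min(y_true), np.min(y_pred))
    hi = max(np.max(y_true), np.max(y_pred))
    plt.scatter(y_true, y_pred)
    plt.plot([lo, hi], [lo, hi])   # points on this line are perfect predictions
    plt.xlabel("Actual")
    plt.ylabel("Predicted")
    plt.show()

# Made-up values for illustration.
y_true = np.array([3.0, 5.0, 7.0])
y_pred = np.array([2.5, 5.0, 8.0])

mse = mean_squared_error(y_true, y_pred)
print(mse)
# plot_predicted_vs_actual(y_true, y_pred)  # uncomment to show the plot
```

Note that this MSE is exactly twice the halved training cost J, so the two always rank models the same way.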