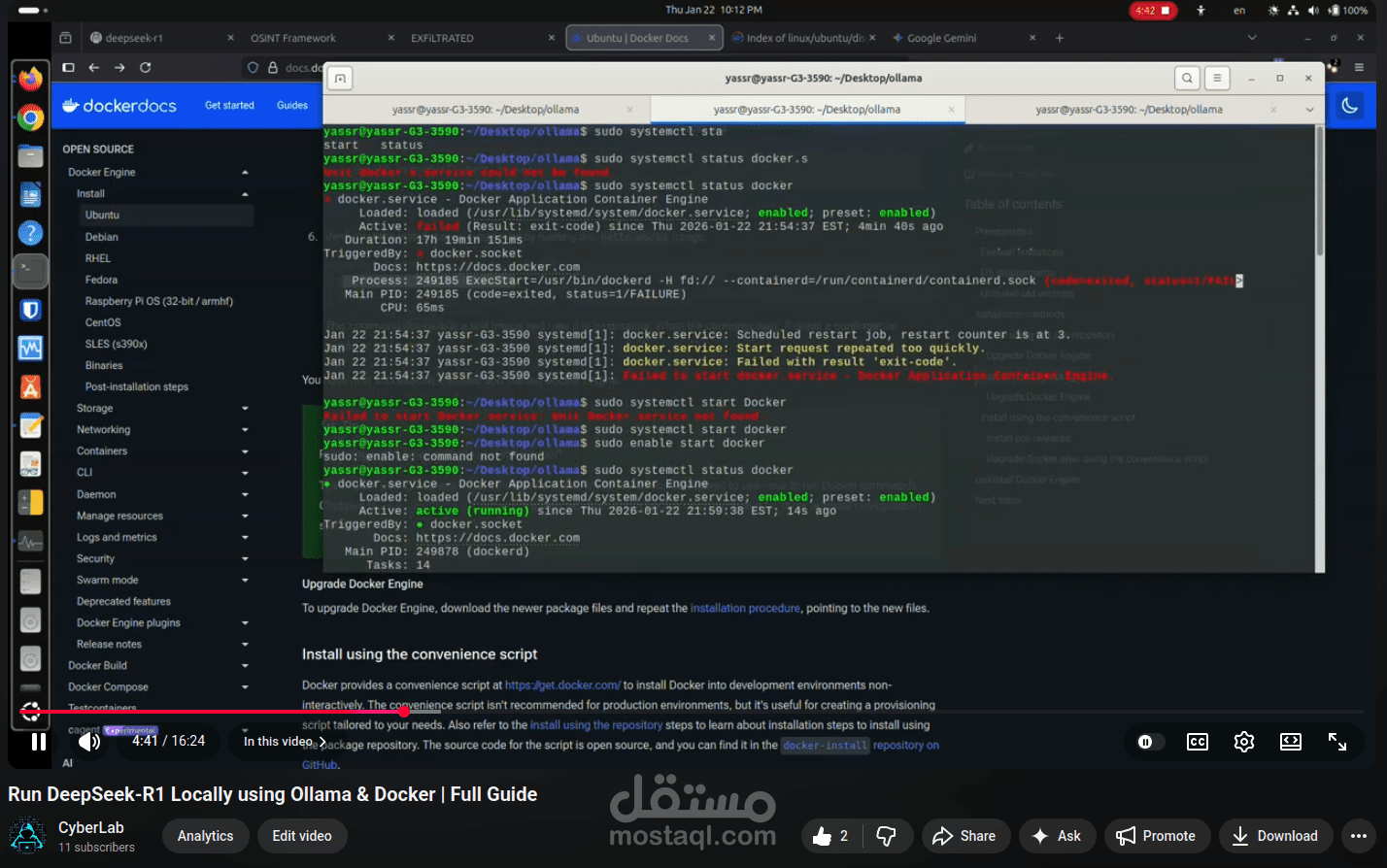

Run DeepSeek-R1 Locally using Ollama & Docker | Full Guide

Project Details

Hands-on Project | Local AI Deployment with Docker

I’ve just published a practical tutorial showing how to run the DeepSeek-R1 model locally on Linux using Ollama and Docker.

As part of building my hands-on technical portfolio, I implemented and documented the complete setup end to end.
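Once the model is running, you can query it from a script as well as from the terminal. The sketch below is a minimal Python client for Ollama's local HTTP API (`POST /api/generate`) that uses only the standard library. It assumes the Ollama container was started as described in Ollama's Docker instructions, for example `docker run -d -v ollama:/root/.ollama -p 11434:11434 --name ollama ollama/ollama` followed by `docker exec -it ollama ollama pull deepseek-r1`, so the API is reachable on the default port 11434. The model tag `deepseek-r1`, the timeout, and the helper names `build_payload`, `split_reasoning`, and `ask` are illustrative assumptions and are not taken from the original tutorial. Depending on the Ollama version, DeepSeek-R1's reasoning may be returned inline inside `<think>` tags, so the sketch separates that reasoning from the final answer when it is present.

```python
import json
import re
import urllib.request

# Assumption: Ollama listens on its default port 11434 on this host
# (e.g. published from the Docker container with -p 11434:11434).
OLLAMA_URL = "http://localhost:11434/api/generate"
MODEL = "deepseek-r1"  # assumption: the model tag pulled into the container


def build_payload(prompt: str, model: str = MODEL) -> bytes:
    """Encode a non-streaming /api/generate request body as JSON bytes."""
    return json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")


def split_reasoning(text: str) -> tuple[str, str]:
    """Separate a <think>...</think> block (if any) from the final answer."""
    match = re.search(r"<think>(.*?)</think>", text, flags=re.DOTALL)
    if match is None:
        # No reasoning block: the whole text is the answer.
        return "", text.strip()
    reasoning = match.group(1).strip()
    # Everything outside the tags is treated as the answer.
    answer = (text[:match.start()] + text[match.end():]).strip()
    return reasoning, answer


def ask(prompt: str, url: str = OLLAMA_URL, timeout: float = 300.0) -> tuple[str, str]:
    """Send a prompt to the local model and return (reasoning, answer)."""
    request = urllib.request.Request(
        url,
        data=build_payload(prompt),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        # With "stream": False, Ollama replies with a single JSON object
        # whose "response" field holds the generated text.
        body = json.load(response)
    return split_reasoning(body["response"])
```

Usage, with the container running: `reasoning, answer = ask("Explain Docker volumes in one sentence.")`. Setting `"stream": False` makes the server return one JSON object instead of a stream of partial chunks. This keeps the client short, but the call waits until the full reply has been generated.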

This project demonstrates:

• Deploying LLMs locally (no cloud services)

• Using Docker for reproducible environments

• Working with Linux-based AI tooling

• Practical understanding of local infrastructure & AI workflows

Project (video):

Feedback is welcome, and I’m always open to connecting with people working in AI, DevOps, or Security.