Arabic Sign Language Letters Classification using Deep Learning

Project Details

I developed a computer vision system that recognizes Arabic Sign Language (ArSL) letters from hand images using deep learning. The project aims to support communication with deaf and hard-of-hearing people by automatically translating hand gestures into readable letters.

The model is a Convolutional Neural Network (CNN) trained from scratch, with no transfer learning or pre-trained models, and it reached 97.13% accuracy on unseen test data.
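For readers less familiar with CNNs, the sketch below shows the three operations a typical convolutional block is made of: convolution, ReLU activation, and max pooling. It is only an illustration in plain Python. The project's framework and layer configuration are not stated here, and the toy image, the kernel values, and the function names are all assumptions made for the example.

```python
# Illustrative only: plain-Python versions of the operations inside one CNN block.
# The input image and kernel below are made-up values, not the project's data.

def conv2d(image, kernel):
    """Valid (no padding), stride-1 cross-correlation, which is what
    deep learning libraries call a convolution."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh) for dj in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def relu(fmap):
    """Replace negative activations with zero."""
    return [[max(0, v) for v in row] for row in fmap]


def max_pool2d(fmap, size=2):
    """Non-overlapping max pooling; rows or columns that do not fill a
    complete window are dropped."""
    out_h = len(fmap) // size
    out_w = len(fmap[0]) // size
    return [
        [
            max(fmap[i * size + di][j * size + dj]
                for di in range(size) for dj in range(size))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def cnn_block(image, kernel):
    """Convolution, then ReLU, then 2x2 max pooling."""
    return max_pool2d(relu(conv2d(image, kernel)))


# Toy 5x5 grayscale "image" with a vertical edge between columns 1 and 2.
image = [[0, 0, 1, 1, 1] for _ in range(5)]
# Hypothetical 2x2 kernel that responds to dark-to-bright vertical edges.
kernel = [[-1, 1],
          [-1, 1]]

features = cnn_block(image, kernel)
print(features)
```

In a trained network the kernel values are learned from the labeled hand images rather than written by hand, and many such blocks are stacked before a final classification layer.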

Key Features

•Designed and implemented a custom CNN architecture from scratch

•Classifies Arabic Sign Language letters from hand gesture images

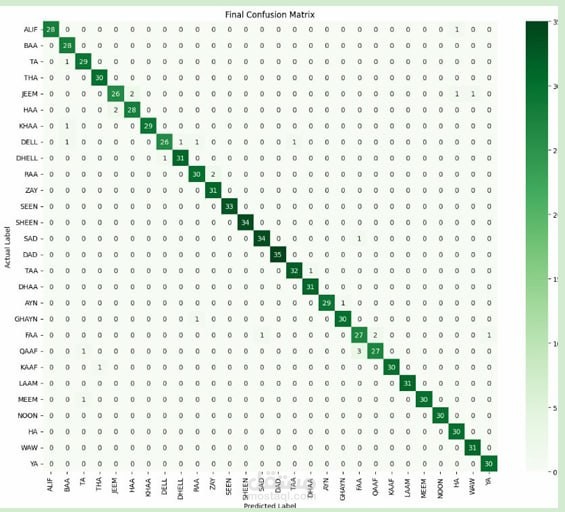

•Achieved 97.13% test accuracy

Model evaluated using:

•Confusion Matrix

•Accuracy and classification performance

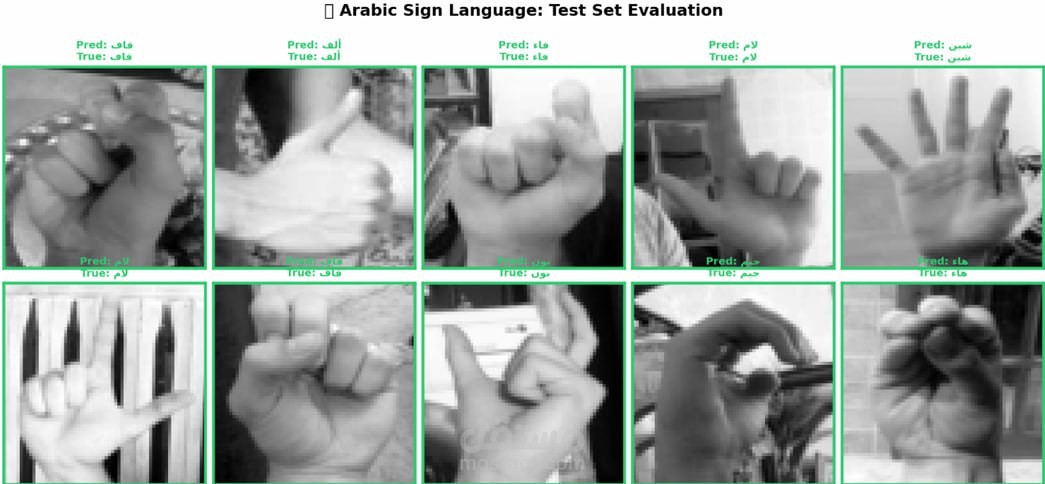

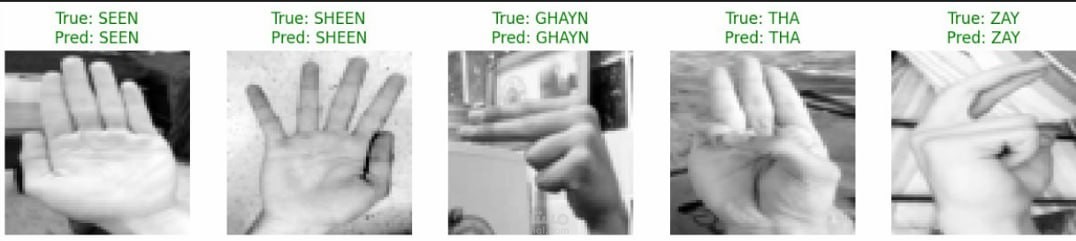

•Predictions on unseen/test images

•Handles visually similar hand gestures with high precision

•Applied image preprocessing and model optimization techniques
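The evaluation items listed above (confusion matrix, accuracy, and per-class performance) can be computed from the true and predicted labels of the test set. The following is a minimal sketch in plain Python, not the project's actual evaluation code. The class names and label lists are hypothetical placeholders, and a library such as scikit-learn could be used instead.

```python
# Minimal evaluation sketch. Labels and predictions below are hypothetical
# placeholders, not results from the project.

def confusion_matrix(y_true, y_pred, labels):
    """Rows are true classes, columns are predicted classes."""
    index = {label: k for k, label in enumerate(labels)}
    matrix = [[0] * len(labels) for _ in labels]
    for t, p in zip(y_true, y_pred):
        matrix[index[t]][index[p]] += 1
    return matrix


def accuracy(matrix):
    """Share of samples on the diagonal (correct predictions)."""
    correct = sum(matrix[k][k] for k in range(len(matrix)))
    total = sum(sum(row) for row in matrix)
    return correct / total if total else 0.0


def precision_recall(matrix, labels):
    """Per-class (precision, recall), useful for spotting letters that
    are confused with visually similar ones."""
    report = {}
    for k, label in enumerate(labels):
        tp = matrix[k][k]
        predicted = sum(row[k] for row in matrix)  # column sum
        actual = sum(matrix[k])                    # row sum
        precision = tp / predicted if predicted else 0.0
        recall = tp / actual if actual else 0.0
        report[label] = (precision, recall)
    return report


# Hypothetical three-class example.
labels = ["alef", "baa", "taa"]
y_true = ["alef", "alef", "baa", "baa", "taa", "taa", "taa", "baa"]
y_pred = ["alef", "alef", "baa", "taa", "taa", "taa", "baa", "baa"]

matrix = confusion_matrix(y_true, y_pred, labels)
report = precision_recall(matrix, labels)

print(matrix)
print(f"accuracy = {accuracy(matrix):.2%}")
for label, (p, r) in report.items():
    print(f"{label}: precision={p:.2f} recall={r:.2f}")
```

Off-diagonal cells show which pairs of letters are mistaken for each other, so the confusion matrix is the natural place to check how well visually similar gestures are separated.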