Sign Language Translator

Project details

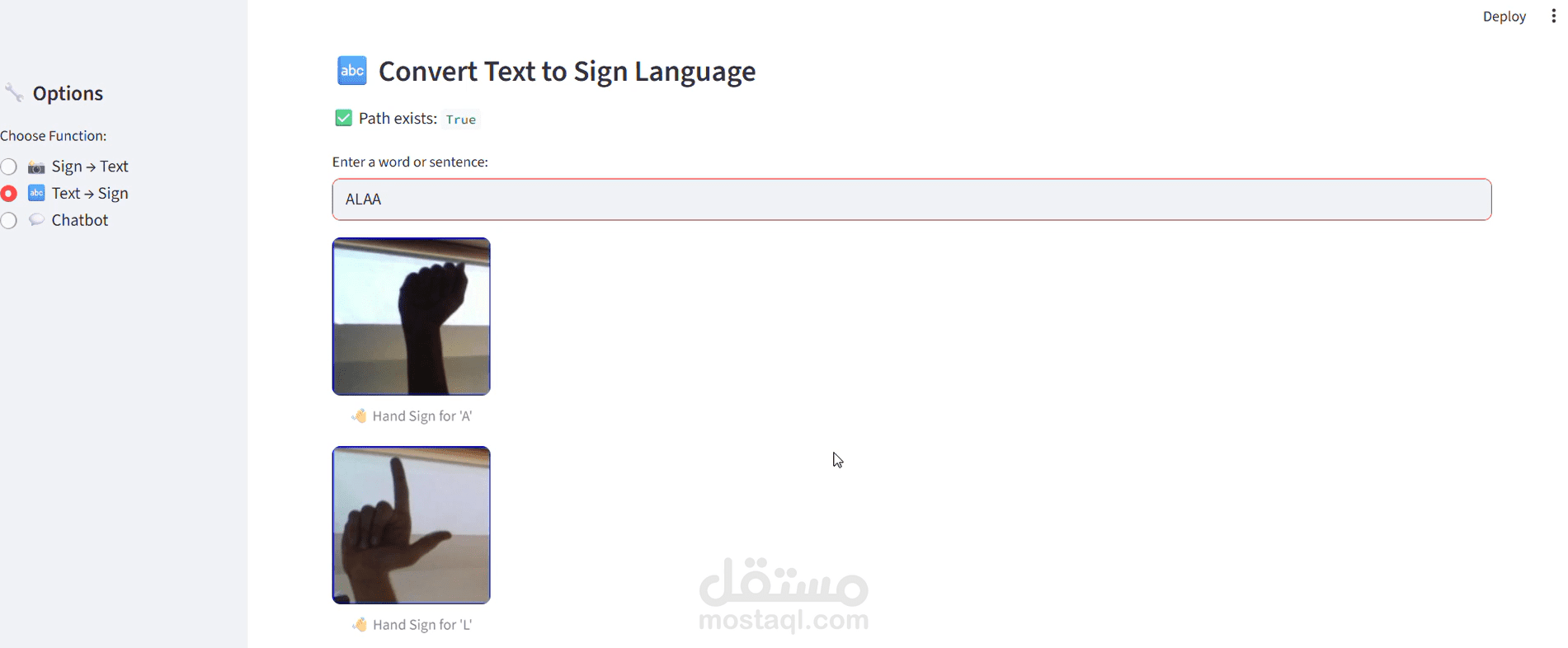

An end-to-end AI project that translates sign language gestures into readable text using Deep Learning.

The system was built using a Convolutional Neural Network (CNN) trained on labeled gesture image data. The model processes visual inputs, extracts meaningful features, and classifies hand signs into their corresponding characters with high accuracy.
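To make the feature-extraction and classification steps concrete, here is a minimal, framework-free sketch of a CNN forward pass: one convolution, a ReLU, 2x2 max pooling, a dense layer, and a softmax. It is an illustration only, not the project's actual model. The function names, the toy 5x5 image, the single hand-written kernel, and the dense weights are all assumptions made for the example; a real model would learn its weights from the labeled gesture images and would normally be built with a deep learning library.

```python
import math


def conv2d(image, kernel):
    """Valid 2-D cross-correlation of a grayscale image with one kernel."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + a][j + b] * kernel[a][b]
                for a in range(kh) for b in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def relu(fmap):
    """Zero out negative activations."""
    return [[max(0.0, v) for v in row] for row in fmap]


def max_pool2x2(fmap):
    """Keep the largest value in each non-overlapping 2x2 window."""
    return [
        [max(fmap[i][j], fmap[i][j + 1], fmap[i + 1][j], fmap[i + 1][j + 1])
         for j in range(0, len(fmap[0]) - 1, 2)]
        for i in range(0, len(fmap) - 1, 2)
    ]


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def classify(image, kernel, dense_weights, labels):
    """Extract features, score each class, and return (label, probabilities)."""
    features = max_pool2x2(relu(conv2d(image, kernel)))
    flat = [v for row in features for v in row]
    logits = [sum(w * x for w, x in zip(ws, flat)) for ws in dense_weights]
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return labels[best], probs


# Toy input (assumed): a 5x5 image with a vertical edge between columns 1 and 2.
toy_image = [[0, 0, 1, 1, 1] for _ in range(5)]
# Hand-written vertical-edge kernel (assumed; a trained CNN learns its kernels).
edge_kernel = [[-1, 1], [-1, 1]]
# Hypothetical dense weights for two classes over the 4 pooled features.
toy_weights = [[1, 0, 1, 0], [0, 1, 0, 1]]

label, probs = classify(toy_image, edge_kernel, toy_weights, ["A", "B"])
print(label, [round(p, 3) for p in probs])
```

In this toy setup the edge kernel responds only where the image changes from dark to bright, pooling keeps the strongest response in each window, and the dense layer weights those pooled responses toward the first class.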

The project follows a structured pipeline including data preprocessing, model training, evaluation, and performance optimization. The trained model was then deployed through an interactive web application built with Streamlit, enabling users to upload or capture gestures and receive real-time predictions.
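Some of the pipeline stages around the model can be sketched in a few lines of plain Python. The helpers below are hypothetical and are not taken from the project's code: they show one common way to scale pixel values before training, to measure accuracy during evaluation, and to turn a model's class probabilities into a character for display, with a confidence threshold assumed at 0.5. The Streamlit interface itself is left out here.

```python
from collections import Counter


def normalize_pixels(image, max_value=255):
    """Preprocessing: scale raw pixel intensities to floats in [0, 1]."""
    return [[p / max_value for p in row] for row in image]


def accuracy(y_true, y_pred):
    """Evaluation: fraction of samples whose predicted label is correct."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("label lists must be non-empty and of equal length")
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)


def confusion_counts(y_true, y_pred):
    """Evaluation: count each (true label, predicted label) pair."""
    return Counter(zip(y_true, y_pred))


def decode_prediction(probs, labels, threshold=0.5):
    """Deployment: return the most likely character, or None if the model
    is not confident enough (the threshold value is an assumption)."""
    best = max(range(len(probs)), key=probs.__getitem__)
    return labels[best] if probs[best] >= threshold else None


# Made-up labels and probabilities, used only to exercise the helpers.
true_labels = ["A", "B", "C", "A"]
pred_labels = ["A", "B", "A", "A"]
print(accuracy(true_labels, pred_labels))
print(confusion_counts(true_labels, pred_labels)[("C", "A")])
print(decode_prediction([0.1, 0.7, 0.2], ["A", "B", "C"]))
```

Returning `None` below the threshold lets the application show a "no sign recognized" message instead of a low-confidence guess, which matters when users capture gestures live.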

This project demonstrates full-cycle AI development, from building and training the model to deploying a functional, user-friendly application.