Sign Language Recognition System

Work Details

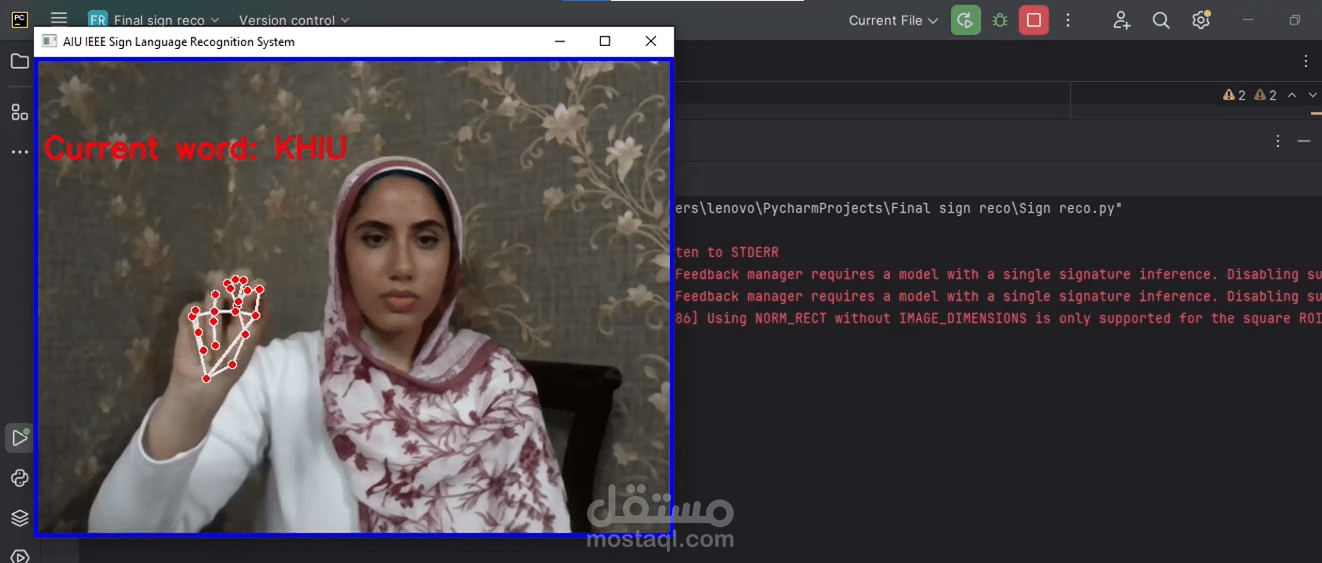

The Sign Language Recognition System is an AI-driven application that interprets sign language in real time from a standard webcam. It combines computer vision and machine learning: MediaPipe tracks hand landmarks in each frame, and a Support Vector Machine (SVM) classifier recognizes gestures from those landmarks. Developed under the IEEE AIU Student Branch, the project demonstrates a practical application of AI in accessibility and communication technologies.
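MediaPipe Hands reports 21 landmarks per hand, each with x, y, and z coordinates. One natural way to feed them to an SVM is as a flat 63-value vector. The project's exact normalization is not documented, so the sketch below assumes a common scheme. It translates the coordinates so that the wrist (landmark 0) becomes the origin, then divides by the largest absolute coordinate. This makes the features independent of where the hand sits in the frame and how large it appears. The function name `normalize_landmarks` is hypothetical.

```python
from typing import Sequence

# MediaPipe Hands reports 21 landmarks per hand, each with x, y, z coordinates.
NUM_LANDMARKS = 21


def normalize_landmarks(landmarks: Sequence[tuple[float, float, float]]) -> list[float]:
    """Turn 21 (x, y, z) landmarks into a 63-value feature vector.

    Assumed (hypothetical) scheme: translate so the wrist (landmark 0) is the
    origin, then divide by the largest absolute coordinate so the result does
    not depend on hand position or apparent hand size.
    """
    if len(landmarks) != NUM_LANDMARKS:
        raise ValueError(f"expected {NUM_LANDMARKS} landmarks, got {len(landmarks)}")
    wx, wy, wz = landmarks[0]
    # Express every point relative to the wrist.
    shifted = [(x - wx, y - wy, z - wz) for x, y, z in landmarks]
    # Scale by the largest absolute coordinate; fall back to 1.0 for a degenerate hand.
    scale = max(abs(v) for point in shifted for v in point) or 1.0
    # Flatten to [x0, y0, z0, x1, y1, z1, ...].
    return [v / scale for point in shifted for v in point]
```

With the `mediapipe.solutions.hands` interface, the input list can be built from a detected hand as `[(lm.x, lm.y, lm.z) for lm in hand_landmarks.landmark]`.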

Key Features:

Real-Time Gesture Recognition: Detects hand signs and assembles consecutive letter predictions into words.

High Accuracy: Trained on a dataset of more than 1,500 samples across 26 classes, reaching 91% accuracy on the test set.

Intelligent Feedback: Displays each predicted letter alongside its confidence score.

Comprehensive Pipeline: Includes separate scripts for data preprocessing, model training, and live recognition.
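The source does not describe in detail how sequential predictions become words. One plausible approach is sketched below under stated assumptions. A letter is accepted only after it has been predicted with enough confidence for several consecutive frames, and the same letter is not repeated until the hand leaves the frame. `WordBuilder` and its thresholds (`min_confidence`, `stable_frames`) are hypothetical names and values, not taken from the project.

```python
from typing import Optional


class WordBuilder:
    """Hypothetical helper that turns per-frame letter predictions into a word."""

    def __init__(self, min_confidence: float = 0.7, stable_frames: int = 5) -> None:
        self.min_confidence = min_confidence  # assumed threshold, not from the project
        self.stable_frames = stable_frames    # frames a letter must persist (assumed)
        self.letters: list[str] = []
        self._candidate: Optional[str] = None
        self._count = 0
        self._last_committed: Optional[str] = None

    def update(self, letter: Optional[str], confidence: float = 0.0) -> None:
        """Feed one frame's prediction; pass letter=None when no hand is detected."""
        if letter is None:
            # Hand left the frame: reset the streak and allow a repeated letter next.
            self._candidate, self._count, self._last_committed = None, 0, None
            return
        if confidence < self.min_confidence:
            # Unreliable frame: break the current streak without committing anything.
            self._candidate, self._count = None, 0
            return
        if letter == self._candidate:
            self._count += 1
        else:
            self._candidate, self._count = letter, 1
        # Commit once per streak, and never the same letter twice in a row
        # unless the hand was removed in between.
        if self._count == self.stable_frames and letter != self._last_committed:
            self.letters.append(letter)
            self._last_committed = letter

    @property
    def current_word(self) -> str:
        return "".join(self.letters)

    def finish_word(self) -> str:
        """Return the accumulated word and start a new one."""
        word = self.current_word
        self.letters.clear()
        self._candidate, self._count, self._last_committed = None, 0, None
        return word
```

Requiring a stable streak trades a little latency for robustness. At 30 fps, five frames is about a sixth of a second, which is enough to filter out single-frame misclassifications while the hand moves between signs.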

Technical Overview:

Programming Language: Python

Machine Learning: scikit-learn (SVM)

Computer Vision: OpenCV, MediaPipe

Model Persistence: Pickle
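Model persistence with Pickle amounts to writing the fitted estimator to a file after training and reading it back in the recognition script. Below is a minimal sketch, assuming a hypothetical file name `sign_model.pkl` and hypothetical helper names `save_model` and `load_model`.

```python
import pickle
from pathlib import Path
from typing import Any

# Hypothetical file name; the project's actual model path is not documented.
MODEL_PATH = Path("sign_model.pkl")


def save_model(model: Any, path: Path = MODEL_PATH) -> None:
    """Serialize a fitted model (for example a scikit-learn SVC) with pickle."""
    with path.open("wb") as f:
        pickle.dump(model, f)


def load_model(path: Path = MODEL_PATH) -> Any:
    """Load a model written by save_model.

    pickle.load can execute arbitrary code, so only load files you trust.
    """
    with path.open("rb") as f:
        return pickle.load(f)
```

Only load pickle files from trusted sources, because unpickling can execute arbitrary code. A scikit-learn model is also best reloaded with the same scikit-learn version that saved it.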

Operational Workflow:

Training Phase: Extracts and normalizes the 21 hand landmarks of each gesture sample, trains an SVM classifier with balanced class weights, and saves the trained model with Pickle.

Recognition Phase: Captures webcam frames, detects hand landmarks in real time, predicts letters, builds words from successive predictions, and displays each prediction with its confidence score.
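The training and prediction steps can be sketched with scikit-learn's `SVC`. This is an illustrative sketch, not the project's code. It assumes each feature is a 63-value landmark vector with a letter label. The RBF kernel, the 80/20 stratified split, and the function names `train_classifier` and `predict_with_confidence` are all assumptions. `class_weight="balanced"` corresponds to the balanced weighting described above, and `probability=True` makes `predict_proba` available for confidence scores.

```python
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.svm import SVC


def train_classifier(X: np.ndarray, y: np.ndarray, seed: int = 42) -> tuple[SVC, float]:
    """Train an SVM on landmark feature vectors and return it with held-out accuracy.

    Assumptions: X has one 63-value row per sample (21 landmarks x 3 coordinates)
    and y holds letter labels; the kernel and split ratio are illustrative choices.
    """
    X_train, X_test, y_train, y_test = train_test_split(
        X, y, test_size=0.2, stratify=y, random_state=seed
    )
    # class_weight="balanced" weights classes inversely to their frequency;
    # probability=True enables predict_proba for confidence scores.
    model = SVC(kernel="rbf", class_weight="balanced", probability=True, random_state=seed)
    model.fit(X_train, y_train)
    return model, float(model.score(X_test, y_test))


def predict_with_confidence(model: SVC, features) -> tuple[str, float]:
    """Return the most probable letter for one feature vector and its probability."""
    probs = model.predict_proba(np.asarray(features, dtype=float).reshape(1, -1))[0]
    best = int(np.argmax(probs))
    return str(model.classes_[best]), float(probs[best])
```

`predict_proba` relies on Platt scaling fitted with internal cross-validation, so its most probable class can occasionally disagree with `model.predict`. Taking the argmax of the probabilities, as above, keeps the displayed letter and its confidence consistent. In a live loop, each frame's feature vector would be passed to `predict_with_confidence`, and the result could be drawn on the frame with OpenCV's `cv2.putText`.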

The system can support communication accessibility, educational tools, and AI demonstrations, helping to bridge the gap between sign language users and the wider community.