Uber-like System Data Pipeline Project

Work Details

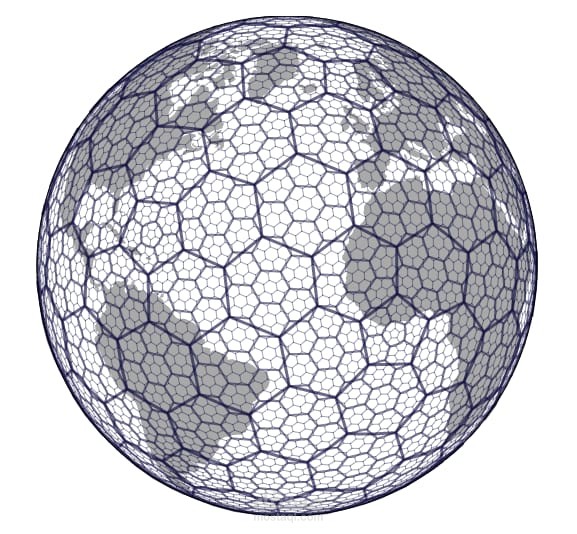

This project focuses on building a data processing pipeline for ride and booking data, starting with the ingestion of raw ride records (simulated as a streaming source) and moving through the essential pre-processing steps. A key component of the pipeline is converting latitude and longitude values into compact geospatial keys (geohash-style cell identifiers) using a geospatial indexing library such as H3. Because nearby points map to the same cell key, spatial grouping and joins can be done on a single indexed column instead of on raw coordinates.
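To make the coordinate-to-key step concrete, here is a minimal sketch in plain Python. It uses the classic geohash encoding as a stand-in for H3, so it is not H3's hexagonal indexing; the function name `geohash_encode` and the default precision are assumptions chosen for illustration only.

```python
# Standard geohash base-32 alphabet (digits plus letters, without a, i, l, o).
_BASE32 = "0123456789bcdefghjkmnpqrstuvwxyz"


def geohash_encode(lat: float, lon: float, precision: int = 7) -> str:
    """Encode a latitude/longitude pair as a geohash string.

    Illustrative stand-in for an H3 cell index; not the H3 algorithm.
    """
    if not (-90.0 <= lat <= 90.0 and -180.0 <= lon <= 180.0):
        raise ValueError("latitude/longitude out of range")

    lat_lo, lat_hi = -90.0, 90.0
    lon_lo, lon_hi = -180.0, 180.0
    chars = []
    bits, bit_count = 0, 0
    use_lon = True  # bits alternate between longitude and latitude, longitude first

    while len(chars) < precision:
        if use_lon:
            mid = (lon_lo + lon_hi) / 2
            if lon >= mid:
                bits = (bits << 1) | 1
                lon_lo = mid
            else:
                bits <<= 1
                lon_hi = mid
        else:
            mid = (lat_lo + lat_hi) / 2
            if lat >= mid:
                bits = (bits << 1) | 1
                lat_lo = mid
            else:
                bits <<= 1
                lat_hi = mid
        use_lon = not use_lon
        bit_count += 1
        if bit_count == 5:  # every 5 bits become one base-32 character
            chars.append(_BASE32[bits])
            bits, bit_count = 0, 0

    return "".join(chars)


# Hypothetical pickup point; a shorter precision yields a coarser cell.
pickup_key = geohash_encode(57.64911, 10.40744, precision=3)  # "u4p"
```

A longer key always extends the shorter one for the same point, so the precision can be chosen per use case (coarse keys for city-level aggregation, fine keys for pickup hot spots).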

The project produces a structured staging table, Staging_Rides_Geo, which forms the foundation for the Fact_Trip model. The processing steps include calculating trip duration, generating geospatial keys for trip start and end points, and associating each record with the appropriate time dimension.
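The per-record logic behind these steps can be sketched in plain Python as follows. The field names (`pickup_ts`, `dropoff_ts`, `trip_duration_s`, `date_key`, `hour_of_day`) and the `YYYYMMDD` integer date key are assumptions, not the project's actual schema; in the PySpark job the same calculations would normally be written as column expressions rather than a per-row function.

```python
from datetime import datetime


def enrich_trip(record: dict) -> dict:
    """Add trip duration and time-dimension keys to one ride record.

    Assumes ISO-8601 timestamps under the hypothetical keys
    'pickup_ts' and 'dropoff_ts'.
    """
    start = datetime.fromisoformat(record["pickup_ts"])
    end = datetime.fromisoformat(record["dropoff_ts"])

    duration_s = int((end - start).total_seconds())
    if duration_s < 0:
        raise ValueError("dropoff precedes pickup")

    return {
        **record,
        "trip_duration_s": duration_s,
        # Integer surrogate key for joining to a date dimension (assumed format).
        "date_key": int(start.strftime("%Y%m%d")),
        "hour_of_day": start.hour,
    }


# Hypothetical sample record.
trip = enrich_trip({
    "ride_id": "r-001",
    "pickup_ts": "2024-03-01T08:15:00",
    "dropoff_ts": "2024-03-01T08:42:30",
})
# trip["trip_duration_s"] is 1650 (27 min 30 s); trip["date_key"] is 20240301
```

Keying the time dimension on the pickup timestamp is a design choice made here for illustration; a pipeline could equally key on dropoff time if that suits the reporting needs better.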

The pipeline is implemented using PySpark on HDFS to support large-scale data processing. Custom transformation logic is used to generate geo-hashes dynamically. A Hive DDL script is also included to define the output staging table in a structured, analytics-ready format.
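As a rough idea of what the Hive DDL for the staging table might contain, the sketch below builds the statement as a string in Python. Only the table name `Staging_Rides_Geo` comes from the description above; every column name, every type, and the Parquet storage format are assumptions for illustration.

```python
# Hypothetical column layout for the staging table (names and types are assumed).
STAGING_COLUMNS = [
    ("ride_id", "STRING"),
    ("pickup_ts", "TIMESTAMP"),
    ("dropoff_ts", "TIMESTAMP"),
    ("trip_duration_s", "BIGINT"),
    ("start_geo_key", "STRING"),
    ("end_geo_key", "STRING"),
    ("date_key", "INT"),
]


def build_staging_ddl(table: str = "Staging_Rides_Geo",
                      columns=STAGING_COLUMNS) -> str:
    """Return a Hive CREATE TABLE statement for the staging table."""
    cols = ",\n".join(f"  {name} {hive_type}" for name, hive_type in columns)
    # Parquet is an assumed storage format, chosen as a common columnar default.
    return f"CREATE TABLE IF NOT EXISTS {table} (\n{cols}\n)\nSTORED AS PARQUET"


ddl = build_staging_ddl()
print(ddl)
```

The generated statement could then be saved as a `.hql` script or submitted through a Hive-enabled Spark session, depending on how the deliverable is packaged.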