e-commerce web scraping (python)

تفاصيل العمل

In the ever-evolving world of e-commerce, data is king. Recently, I embarked on a fascinating journey to scrape product data from Jumia, one of Africa's leading online marketplaces. Here's a detailed look into the process and the technologies that made it possible.

Technologies Used:

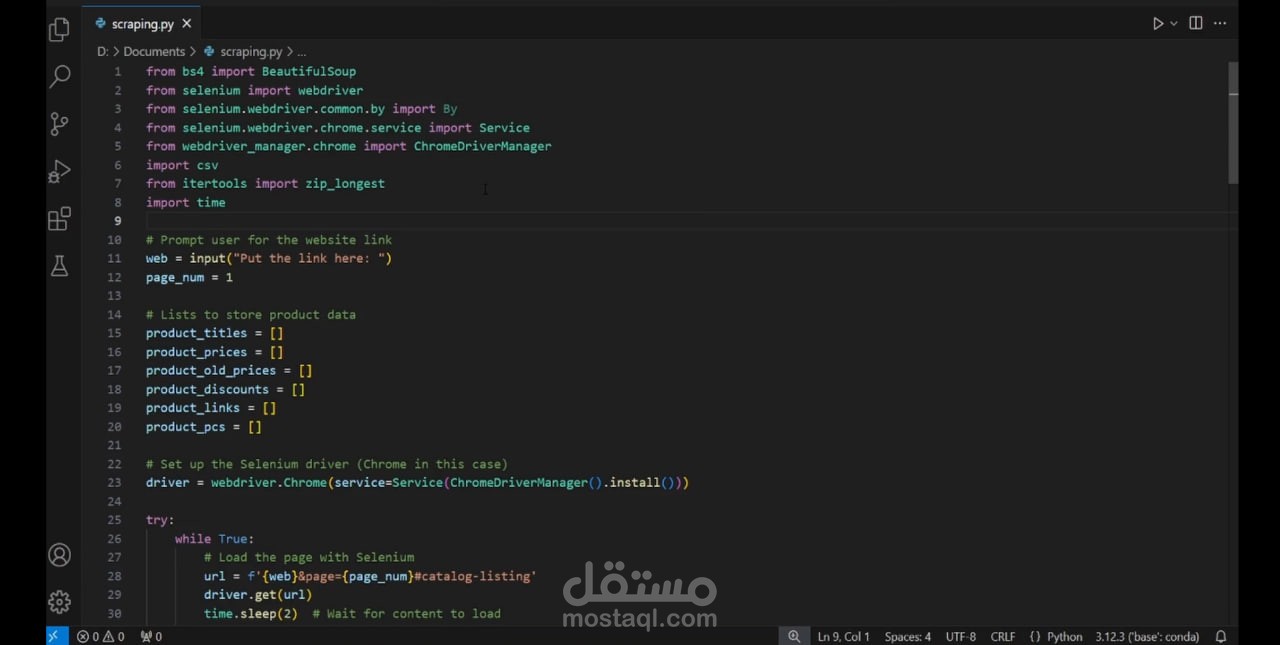

• Python: The backbone of our project.

• BeautifulSoup: For parsing HTML and XML documents.

• Selenium: To automate web browser interaction.

• CSV Module: For saving the scraped data.

• WebDriver Manager: To manage browser drivers seamlessly.

Project Overview:

The goal was to extract detailed product information, including titles, prices, discounts, pieces and links for multiple products.

1. User Input: Prompted the user to input the website link to scrape.

2. Data Storage: Initialized lists to store product data such as titles, prices, old prices, discounts, links and pcs.

3. Selenium Setup: Configured the Selenium WebDriver for Chrome to automate the browsing process.

4. Scraping Loop: Implemented a loop to continuously scrape data from each page until the user decides to stop.

5. Data Extraction: Used BeautifulSoup to parse the HTML content and extract product details.

6. Pagination Handling: Prompted the user for the next page number or to stop scraping.

7. Additional Details: Scraped further details from individual product links.

8. Data Export: Saved the collected data into a CSV file for further analysis.

? Key Challenges and Solutions:

• Dynamic Content Loading: Handled by adding delays (time.sleep) to ensure content is fully loaded before scraping.

• Error Handling: Implemented try-except blocks to manage missing data gracefully.

• Pagination: Allowed user input to navigate through multiple pages, ensuring comprehensive data collection.

• Scraping pcs: Made by using product links and scraping inner pages.

Impact and Applications:

This project not only enhanced my skills in web scraping and data handling but also provided valuable insights into the e-commerce landscape.

The data collected can be used for:

• Market Analysis: Understanding pricing strategies and discount patterns.

• Competitor Analysis: Comparing product offerings and pricing with competitors.

• Customer Insights: Identifying popular products and trends.

Conclusion:

Web scraping is a powerful tool for extracting valuable data from websites. This project is a testament to the potential of Python and its libraries in automating data collection and analysis. I'm excited to apply these insights to drive data-driven decisions in the e-commerce space.